Companies like Amazon, Microsoft, and Google have proven to us that we can trust them with our personal data. Now it’s time to reward that trust by giving them complete control over our computers, toasters, and cars.

WHAT IS EDGE COMPUTING?

In the beginning, there was One Big Computer. Then, in the Unix era, we learned how to connect to that computer using dumb (not a pejorative) terminals. Next came personal computers, the first time regular people really owned the hardware that did the work.

Right now, in 2018, we’re firmly in the cloud computing era. Many of us still own personal computers, but we mostly use them to access centralized services like Dropbox, Gmail, Office 365, and Slack. Additionally, devices like Amazon Echo, Google Chromecast, and the Apple TV are powered by content and intelligence that’s in the cloud — as opposed to the DVD box set of Little House on the Prairie or CD-ROM copy of Encarta you might’ve enjoyed in the personal computing era.

As centralized as this all sounds, the truly amazing thing about cloud computing is that a seriously large percentage of all companies in the world now rely on the infrastructure, hosting, machine learning, and compute power of a very select few cloud providers: Amazon, Microsoft, Google, and IBM.

Amazon, the largest by far of these “public cloud” providers (as opposed to the “private clouds” that companies like Apple, Facebook, and Dropbox host themselves), had 47 percent of the market in 2017.

Edge computing has emerged as a buzzword you should perhaps pay attention to because these companies have realized that there isn’t much growth left in the cloud space. Almost everything that can be centralized has been centralized. Most of the new opportunities for the “cloud” lie at the “edge.”

So, what is edge?

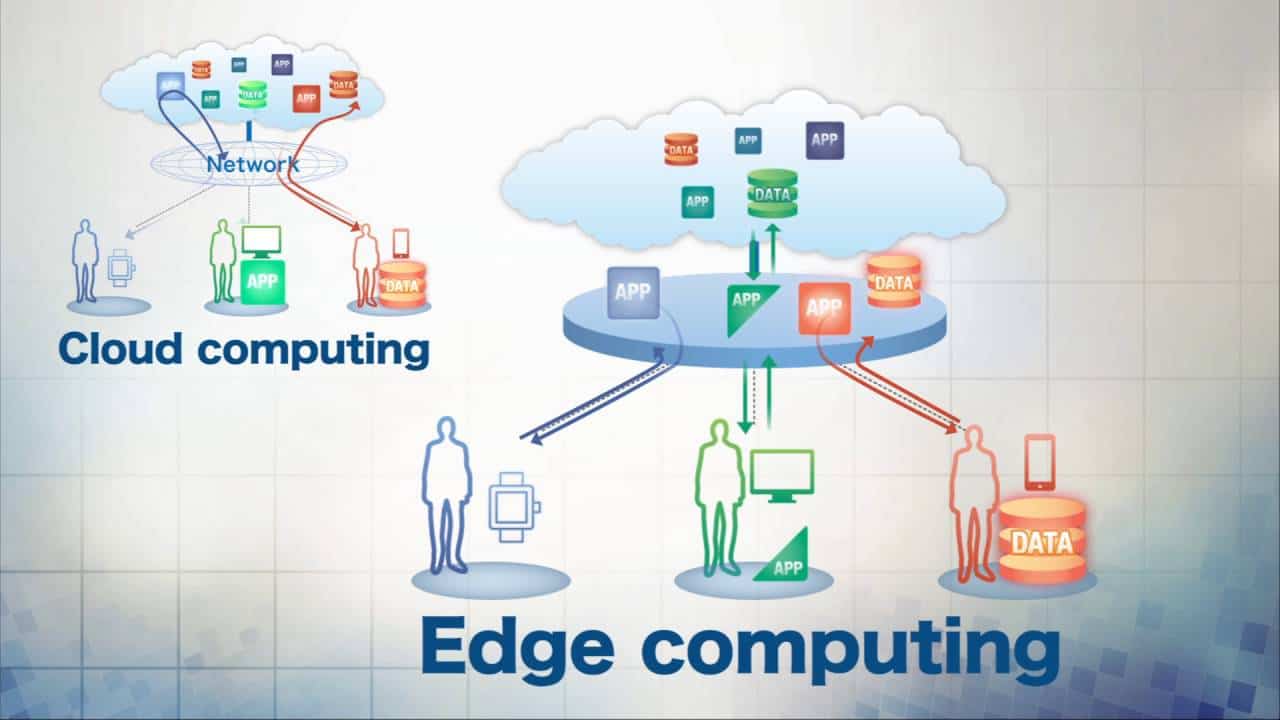

The word edge in this context means literal geographic distribution. Edge computing is computing that’s done at or near the source of the data, instead of relying on the cloud at one of a dozen data centers to do all the work. It doesn’t mean the cloud will disappear. It means the cloud is coming to you.

That said, let’s get out of the word definition game and try to examine what people mean practically when they extol edge computing.

LATENCY

One great driver for edge computing is the speed of light. If Computer A needs to ask Computer B, half a globe away, before it can do anything, the user of Computer A perceives this delay as latency. The brief moments after you click a link before your web browser starts to actually show anything are in large part due to the speed of light. Multiplayer video games implement numerous elaborate techniques to mitigate true and perceived delay between you shooting at someone and you knowing, for certain, that you missed.
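To put a rough number on that delay, here is a back-of-the-envelope sketch in Python. It assumes "half a globe away" means about 20,000 km (roughly half of Earth's circumference) and that light in optical fiber travels at about two-thirds of its vacuum speed. Both figures are approximations, and real networks add routing and processing time on top of this physical floor.

```python
# Rough physical lower bound on round-trip delay between two computers.
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum
FIBER_FACTOR = 2 / 3               # assumption: light in fiber moves at ~2/3 of that
HALF_GLOBE_KM = 20_000             # assumption: about half of Earth's circumference


def round_trip_ms(distance_km: float, medium_factor: float = 1.0) -> float:
    """Minimum round-trip time in milliseconds over a straight path."""
    one_way_seconds = distance_km / (SPEED_OF_LIGHT_KM_S * medium_factor)
    return 2 * one_way_seconds * 1000


vacuum_ms = round_trip_ms(HALF_GLOBE_KM)
fiber_ms = round_trip_ms(HALF_GLOBE_KM, FIBER_FACTOR)
nearby_ms = round_trip_ms(50, FIBER_FACTOR)  # a hypothetical edge site 50 km away

print(f"Half a globe, vacuum: {vacuum_ms:.1f} ms")
print(f"Half a globe, fiber:  {fiber_ms:.1f} ms")
print(f"50 km away, fiber:    {nearby_ms:.2f} ms")
```

Under these assumptions, a single question-and-answer across half the planet costs a couple hundred milliseconds before either computer does any actual work, while a machine 50 km away answers in well under a millisecond of travel time. That gap is the physical case for moving computation closer to the user.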

Voice assistants typically need to resolve your requests in the cloud, and the roundtrip time can be very noticeable. Your Echo has to process your speech, send a compressed representation of it to the cloud, the cloud has to uncompress that representation and process it — which might involve pinging another API somewhere, maybe to figure out the weather, and adding more speed of light-bound delay — and then the cloud sends your Echo the answer, and finally you can learn that today you should expect a high of 85 and a low of 42, so definitely give up on dressing appropriately for the weather.
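That chain of hops can be sketched as a simple latency budget. The stage names below follow the description above, but every timing is a made-up placeholder chosen only to show how the pieces add up. None of them is a measurement of a real Echo or of Amazon's services.

```python
# Hypothetical latency budget for one voice request.
# Each entry: (stage, milliseconds, crosses_the_network).
# All millisecond values are illustrative placeholders, not measurements.
PIPELINE = [
    ("process speech on the device", 50, False),
    ("send compressed audio to the cloud", 40, True),
    ("decompress and interpret in the cloud", 120, False),
    ("call a weather API somewhere else", 80, True),
    ("send the answer back to the device", 40, True),
]

total_ms = sum(ms for _, ms, _ in PIPELINE)
network_ms = sum(ms for _, ms, crosses_network in PIPELINE if crosses_network)

print(f"Total round trip: {total_ms} ms")
print(f"Spent crossing the network: {network_ms} ms "
      f"({network_ms / total_ms:.0%} of the total)")
```

The point of the sketch is the shape rather than the numbers: every stage marked as crossing the network is bounded by distance, so it is the part of the budget that shrinks when work moves onto the device or to a nearby server.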

So, a recent rumor that Amazon is working on its own AI chips for Alexa should come as no surprise. The more processing Amazon can do on your local Echo device, the less your Echo has to rely on the cloud. It means you get quicker replies, Amazon’s server costs are lower, and conceivably, if enough of the work is done locally, you could end up with more privacy, if Amazon is feeling magnanimous.

PRIVACY AND SECURITY

It might be weird to think of it this way, but the security and privacy features of an iPhone are well accepted as an example of edge computing. Simply by doing encryption and storing biometric information on the device, Apple offloads a ton of security concerns from the centralized cloud to its diasporic users’ devices.

But the other reason this feels like edge computing to me, not personal computing, is that while the compute work is distributed, the definition of the compute work is managed centrally. You didn’t have to cobble together the hardware, software, and security best practices to keep your iPhone secure. You just paid $999 at the cellphone store and trained it to recognize your face.

The management aspect of edge computing is hugely important for security. Think of how much pain and suffering consumers have experienced with poorly managed Internet of Things devices.