ARTIFICIAL intelligence is here to stay – and many users are hitting back at tech giants like Microsoft and Meta over data privacy concerns.

While the companies have taken note of the criticism, not all responses have been equally reassuring.

Microsoft

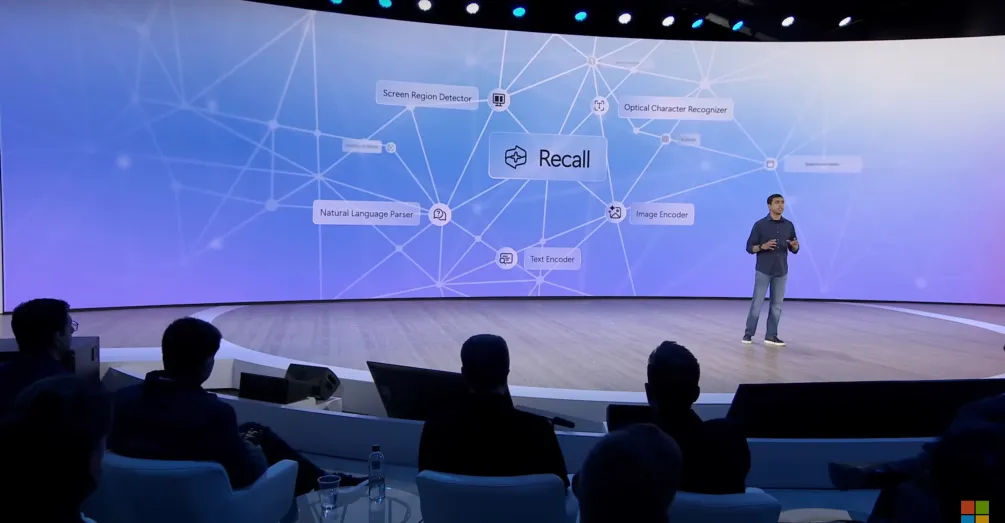

Microsoft was forced to delay the release of an artificial intelligence tool called Recall following a wave of backlash.

The feature was introduced last month, billed as “your everyday AI companion.”

It takes screenshots of your device every few seconds to build a searchable library of content. This can include passwords, conversations, and private photos.

Its release was delayed indefinitely following an outpouring of criticism from data privacy experts, including the Information Commissioner’s Office in the UK.

In the wake of the outrage, Microsoft announced changes to Recall ahead of its public release.

“Recall will now shift from a preview experience broadly available for Copilot+ PCs on June 18, 2024, to a preview available first in the Windows Insider Program (WIP) in the coming weeks,” the company told The U.S. Sun.

“Following receiving feedback on Recall from our Windows Insider Community, as we typically do, we plan to make Recall (preview) available for all Copilot+ PCs coming soon.”

When asked to comment on claims that the tool was a security risk, the company declined to respond.

Recall starred in the unveiling of Microsoft’s new computers at its developer conference last month.

Yusuf Mehdi, the company’s corporate vice president, said the tool used AI “to make it possible to access virtually anything you have ever seen on your PC.”

Shortly after its debut, the ICO vowed to investigate Microsoft over user privacy concerns.

Microsoft announced a host of updates to the forthcoming tool on June 13. Recall will now be turned off by default.

The company has repeatedly reaffirmed its “commitment to responsible AI,” identifying privacy and security as guiding principles.

Adobe

Adobe overhauled an update to its terms of service after customers raised concerns that their work would be used for artificial intelligence training.

The software company faced a deluge of criticism over ambiguous wording in a terms-of-use reacceptance prompt it issued earlier this month.

Customers complained they were locked out of their accounts unless they agreed to grant Adobe “a worldwide royalty-free license to reproduce, display, distribute, modify and sublicense” their work.

Some users suspected the company was accessing and using their work to train generative AI models.

Executives including David Wadhwani, Adobe’s president of digital media, and Dana Rao, the company’s chief trust officer, insisted the terms had been misinterpreted.

In a statement, the company denied training generative AI on customer content, taking ownership of a customer’s work, or allowing access to customer content beyond what’s required by law.

The controversy was the latest flare-up in an ongoing dispute between Adobe and its users over the company’s use of AI technology.

The company, which dominates the market with tools for graphic design and video editing, released its Firefly AI model in March 2023.

Firefly and similar programs train on datasets of preexisting work to create text, images, music, or video in response to users’ prompts.

Artists sounded the alarm after realizing their names were being used as tags for AI-generated imagery in Adobe Stock search results. In some cases, the AI art appeared to mimic their style.

Illustrator Kelly McKernan was one outspoken critic.

“Hey @Adobe, since Firefly is supposedly ethically created, why are these AI generated stock images using my name as a prompt in your data set?” she tweeted.

These concerns only intensified following the update to the terms of use. Sasha Yanshin, a YouTuber, announced that he had canceled his Adobe license “after many years as a customer.”

“This is beyond insane. No creator in their right mind can accept this,” he wrote.

“You pay a huge monthly subscription and they want to own your content and your entire business as well.”

Adobe executives have conceded that the language used in the terms-of-service reacceptance was ambiguous at best.

In a social media post, Adobe’s chief product officer, Scott Belsky, acknowledged that the summary wording was “unclear” and that “trust and transparency couldn’t be more crucial these days.”

Belsky and Rao addressed the backlash in a news release on Adobe’s official blog, writing that they had an opportunity “to be clearer and address the concerns raised by the community.”

Adobe’s latest terms explicitly state that the company “will not use your Local or Cloud Content to train generative AI.”

The only exception is work a user submits to the Adobe Stock marketplace, which is then fair game for training Firefly.

Meta

Meta has come under fire for training artificial intelligence tools on the data of its billions of Facebook and Instagram users.

Suspicion arose in May that the company had changed its privacy policy ahead of an expected backlash over scraping social media content to train its AI.

One of the first people to sound the alarm was Martin Keary, the vice president of product design at Muse Group.

Keary, who is based in the United Kingdom, said he’d received a notification that the company planned to begin training its AI on user content.

Following a wave of backlash, Meta issued an official statement to European users.

The company insisted it was not training its AI on private messages, only on content users chose to make public, and that it never pulled information from the accounts of users under 18.

An opt-out form became available towards the end of 2023 under the name Data Subject Rights for Third Party Information Used for AI at Meta.

At the time, the company said its latest open-source language model, Llama 2, had not been trained on user data.

But things appear to have changed, and while users in the EU can opt out, users in the United States lack a legal basis to do so in the absence of a federal privacy law.

EU users can complete the Data Subject Rights form, which is nestled away in the Settings section of Instagram and Facebook under Privacy Policy.

But the company says it can only address your request after you demonstrate that its AI “has knowledge” of you.

The form instructs users to submit the prompts they entered into an AI tool that returned their personal information, along with proof of that response.

There is also a disclaimer informing users that their opt-out request will only opt them out in accordance with “local laws.”

Advocates with NOYB – European Center for Digital Rights filed complaints against the tech giant in nearly a dozen countries.

The Irish Data Protection Commission (DPC) subsequently issued an official request to Meta to address the complaints.

But the company hit back at the DPC, calling its request “a step backwards for European innovation.”

Meta insists that its approach complies with legal regulations including the EU’s General Data Protection Regulation. The company did not immediately return a request for comment.

Amazon

The online retailer caught flak after dozens of AI-generated books appeared on the platform.

The issue was first raised a year ago, when authors spotted works listed under their names that they had not written.

Compounding the issue was a rash of books containing false and potentially harmful information, including several about mushroom foraging.

One of the most outspoken critics was author Jane Friedman. “I would rather see my books get pirated than this,” she declared in a blog post from August 2023.

The company announced new limits on self-publishing in a post on its Kindle Direct Publishing (KDP) forum that September. KDP allows authors to publish their books and list them for sale on Amazon.

“While we have not seen a spike in our publishing numbers, in order to help protect against abuse, we are lowering the volume limits we have in place on new title creations,” the statement read.

Amazon claimed it was “actively monitoring the rapid evolution of generative AI and the impact it is having on reading, writing, and publishing.”

The tech giant subsequently removed the AI-generated books that were falsely listed as being written by Friedman.

After the backlash, the company also updated its KDP content guidelines to include a section about AI-generated books.

“We require you to inform us of AI-generated content (text, images, or translations) when you publish a new book or make edits to and republish an existing book through KDP,” the new rules read, in part.

Amazon uses the term “AI-generated” to describe work created by artificial intelligence, even if a user “applied substantial edits afterwards.”

Vendors are not required to disclose AI-assisted content, which Amazon defines as content they created themselves and then refined using AI tools.

However, it’s up to vendors to determine if their content meets the platform’s guidelines.

Amazon also limited authors to self-publishing three books a day, in what was seen as an effort to slow the pace of AI content creation.